Pilot reports from the Creative Destruction Lab’s Machine Learning and the Market for Intelligence conference

As a partner of the Creative Destruction Lab, a seed-stage tech incubator, we develop the website and the conference materials for CDL’s annual Machine Learning and the Market for Intelligence conference. We attended the third annual conference this year and, between panic attacks over the impending robot takeover, gleaned the following insights.

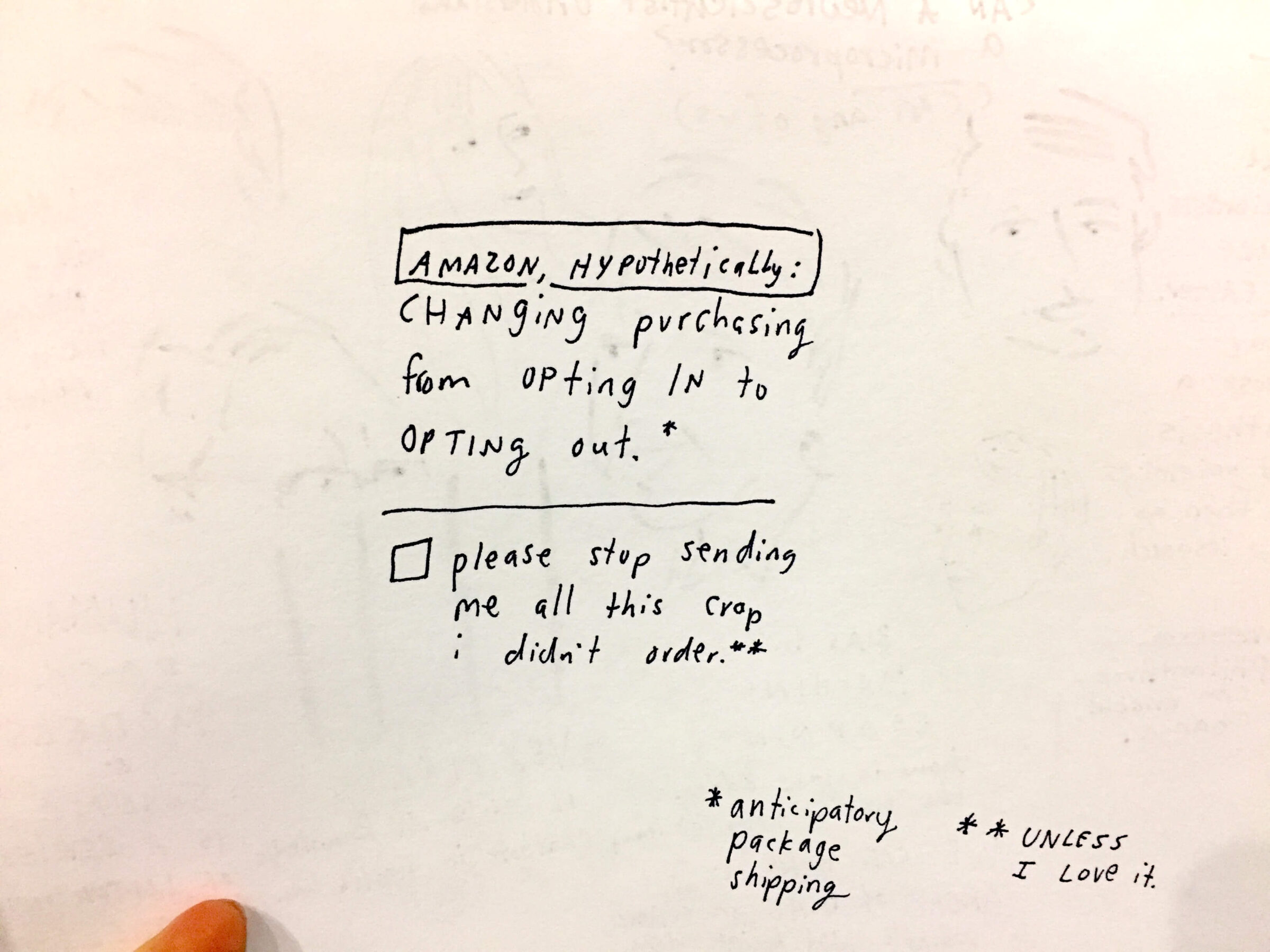

Professor Ajay Agrawal set the tone for the meeting by offering up time as a metaphor for how AI will affect business. He used the rate of prediction accuracy as a way of rendering the concept understandable. Right now, we buy one out of every 20 things the Amazon Prediction Engine recommends to us. What happens when the algorithm improves so much that Amazon feels comfortable sending us products before we even order them?

Richard Sutton, one of the founding fathers of reinforcement learning, a key component in many modern AIs, creatively destroyed the basic terminology we use by arguing that the term Artificial Intelligence is inaccurate. According to him, the level of intelligence and awareness in machines will soon be just as “real” as ours.

MIT professor and co-founder of the Future of Life Institute Max Tegmark took these ideas even further. He suggested that it’s time to start developing laws to protect the rights and freedoms of AIs, as well as to outlaw their use as weapons and focus their role on the common good. His focus on rights and the common good brought to mind how poorly we’ve protected the rights and freedoms of humans, which raises one of the underlying questions about AI’s promise: is it really going to fix things, or do we have a lot more to fix before we go messing around with it?

The practical applications

Aside from predictions and philosophy, pragmatic industry insights were also on offer. We learned about optimizing machine learning systems by combining human insight with AI power. Rotman School of Management professor Joshua Gans identified a skills gap in the industry, noting that current software engineering methodology needs to be rethought. Anyone worried about career prospects might be happy to hear that AI Product Manager could be a hot job in the next few years.

Meanwhile, Ben Goertzel introduced SingularityNet, his idea for a blockchain-powered marketplace where AIs can collaborate in an open, decentralized way. His hope is that a freer platform can keep AIs from being monopolized by governments and massive corporations.

With the combination of machine intelligence and quantum computing, we are nearing the creation of an intelligence that far surpasses our own. Even though we engineered it, we don’t fully understand how it works. The overarching sense from the conference, however, was that the risks of creating a superintelligent entity aren’t as much of a concern as the intentions of the people who own it.

As it happens, most of this technology is getting bought up by large tech companies and the US military.